the generalist is about to eat the specialist alive

LLMs compressed expertise. the moat around deep specialization is thinner than ever. the future belongs to fast generalists with good taste. here's why i believe that and how i build.

in the next few years, being a generalist will probably give you better chances of making it big than being an ultra-specialist in one domain.

i know. bold claim. let me back it up.

LLMs are already compressing a big chunk of technical expertise. the knowledge gap between "i've never used this framework" and "i can ship something decent in it because i did it in this other one" is 80% gone imo, and deep specialization isn't the moat it used to be.

the specialist spent years mastering one tool. the generalist spent years connecting ten domains and now AI hands them passable fluency in each one overnight.

this became more obvious to me over the last few months with gpt 5.4 and opus 4.6, as I used both every day.

the email that made me share this

this week someone emailed me after i closed their GitHub issue on foxguard.

"i was impressed by the speed at which you processed the issue i posted. seeing how fast you crunch tickets, i imagine you vibe code. and seeing the quality on foxguard, i'm curious about your process."

fair question. and super happy to share what my monkey brain discovered as i'm experimenting w this stuff.

the claude code leak showed that there's nothing magical in the tool. i operate as a generalist who moves fast across domains: security research, infrastructure, product, even music production. AI agents handle the mechanical depth in each one.

46 PRs of AI slop in a day?

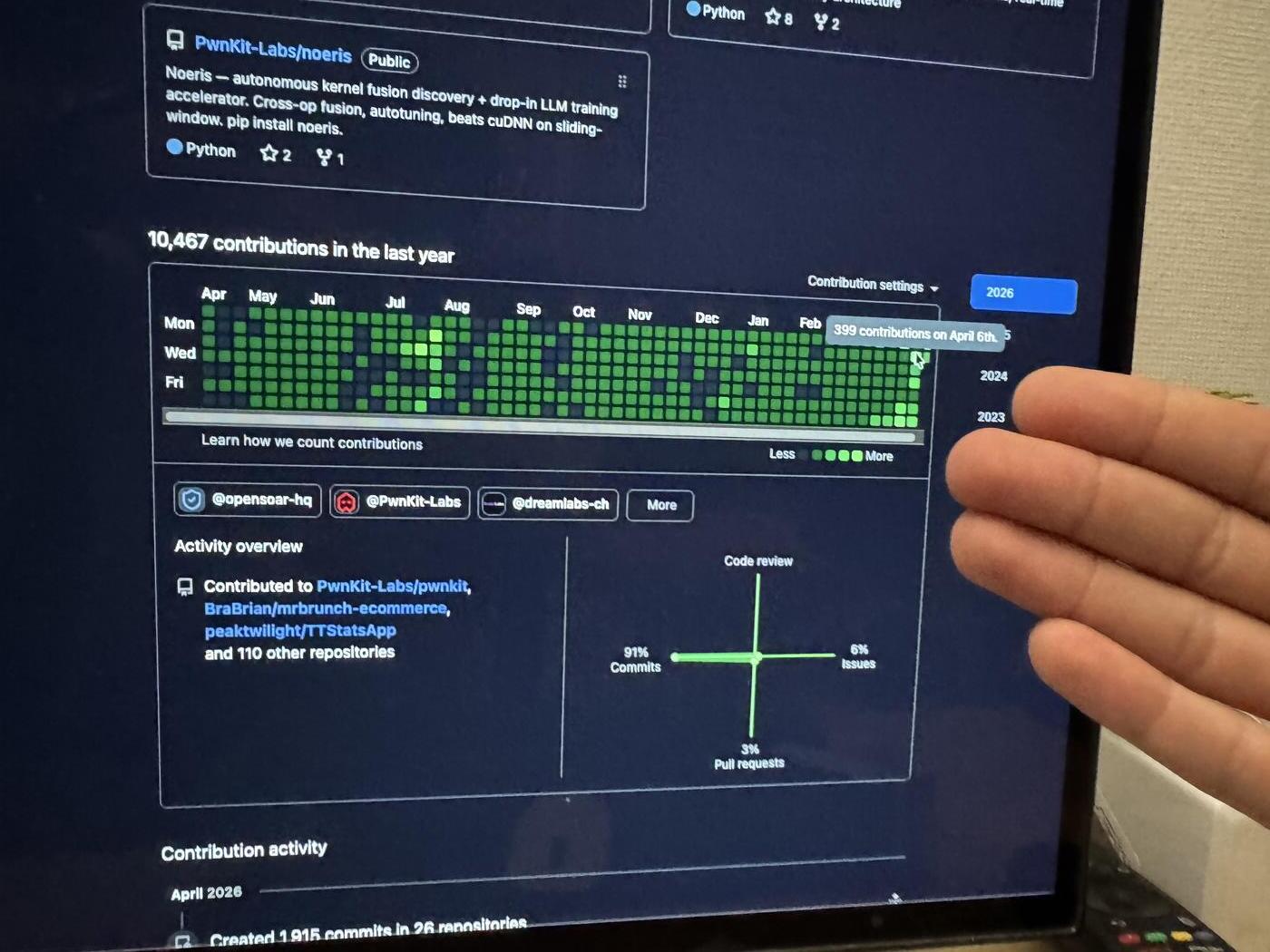

i merged 46 PRs in one day and it got noticed. cross-file taint analysis, a new language scanner, refactors, precision fixes, all in parallel. Is it all fully clean code? Probably not, I would get roasted by some rust guys... But is it above average compared to a bunch of open-source and even enterprise-level software I touch at my 9-5 job? oh absolutely lol

how? sub-agents and context is all you need (for those who get the reference).

the key insight is that most work in a codebase is more independent than people think. if two tasks touch different files and different logic, they can run in parallel. (obvious i know, but i hear a lot of talk and not a lot of "do" on twitter...) so i launch multiple agent sessions simultaneously, one per independent workstream.

one agent is writing the new scanner. another is fixing edge cases in the existing parser. a third is refactoring the output format. they don't touch the same files, so they don't conflict. now obviously you gotta know the product you're building and understand a minimum of programming concepts to make this work.
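here's a minimal sketch of that "do these tasks collide?" check, in python. to be clear, this is not how foxguard or any of the agent tools do it. the `Task` shape, the `plan_parallel_batches` helper, and the file paths are all made up for illustration. it only checks file overlap, which is a rough proxy: two tasks can touch different files and still step on the same logic, so the human still has to know the product.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """One unit of work for one agent session (hypothetical shape)."""
    name: str
    files: frozenset[str]  # files the task is expected to touch


def plan_parallel_batches(tasks: list[Task]) -> list[list[Task]]:
    """Greedily group tasks so that no two tasks in a batch share a file."""
    batches: list[tuple[set[str], list[Task]]] = []
    for task in tasks:
        for claimed_files, members in batches:
            if claimed_files.isdisjoint(task.files):
                # No overlap with anything in this batch: safe to run alongside.
                claimed_files.update(task.files)
                members.append(task)
                break
        else:
            # Overlaps with every existing batch: start a new one.
            batches.append((set(task.files), [task]))
    return [members for _, members in batches]


# Hypothetical workstreams and paths, for illustration only.
tasks = [
    Task("new scanner", frozenset({"src/scanners/new_lang.rs"})),
    Task("parser edge cases", frozenset({"src/parser.rs"})),
    Task("output refactor", frozenset({"src/output.rs"})),
    Task("parser error messages", frozenset({"src/parser.rs", "src/errors.rs"})),
]

for number, batch in enumerate(plan_parallel_batches(tasks), start=1):
    print(number, [task.name for task in batch])
```

the first three tasks land in one batch because their file sets are disjoint. the fourth also touches `src/parser.rs`, so it waits for a second batch instead of racing the parser fix.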

the commits and merges are my checkpoints. each PR is somewhat small, focused, independently reviewable. when it looks right, it ships. no batching, no waiting, no "i'll review all of these later." NO! because when that happens, the agents write code so fast that things get out of hand and the branches diverge that much faster. sure you could fix a merge conflict, but why procrastinate the thing and add more work for later??

also, 46 PRs sounds insane. but each one is a clean, atomic unit of work. it's not 46 massive changes. it's 46 focused changes that happened to run in parallel.

this is generalism in action. i'm not the world's best static analysis engineer. i'm definitely not a good rust developer. but i understand enough about security, parsing, and software architecture to direct agents across all of it simultaneously, and ship something that works.

why this is happening now

the gap between "i have an idea" and "i have working code" is almost gone. LLMs compressed it (at least for people who code like me). for a certain class of problems (well-scoped, technical, with clear success criteria), going from thought to code in minutes is now realistic.

this changes everything about how specialization works.

the old world: you need deep expertise to ship anything decent. the specialist wins because they can do things the generalist simply can't.

the new world: you need deep expertise to ship something great. but "decent" is now accessible to anyone who can direct an agent well. and "decent, shipped today" beats "perfect, shipped never" almost every time.

i work with almost zero specs. yup, didn't i say i'd get roasted?

no PRDs. no architecture docs written before coding starts. (sure sometimes claude or codex in plan mode, but that's about it) no design meetings like at my day job. the process is: intuition becomes prototype, prototype becomes test, test becomes merge. usually same day, sometimes same morning.

this sounds reckless, but i think it's the opposite.

specs are insurance against uncertainty. if you don't know what you're building, a spec helps you figure it out. but if you can prototype faster than you can write a spec, the prototype is the spec. it's a spec that actually runs, that you can test, that you can show to someone and get real feedback on.

so why would i spend an hour writing a document describing what the code should do, when i can spend that hour writing the code and then see if it works and iterate in that same amount of time?

i'm not saying specs are always wrong. sure, large teams need alignment artifacts. cross-org work needs contracts. but for a solo builder or a small team moving fast (which a bunch of unicorns valued at $1 billion+ are right now), the spec is often the most expensive part of the process. it's also a lovely gift to us startup founders who compete with the big guys :D

the generalist's toolkit

i use Claude Code (the CLI, not the desktop app) as my primary tool with the max plan. when i need to parallelize or get a second opinion on code, i switch to Codex CLI or OpenCode. i've also been testing Paperclip AI which is interesting for coordination.

always CLI. almost never GUI for coding.

it's a speed thing for me. My take is that GUIs are designed for ease of adoption and broader audiences. CLIs are designed for execution for us nerds. when you already know what you want, the GUI is overhead.

but i'll say it AGAAin and AGaiiin: the tools matter less than ever.

i've been using LLMs since GPT-2. before ChatGPT. before the AI hype. building intuition for what to ask it and how to interact with it, how to structure context, when to give the agent autonomy versus when to intervene.

this sounds vague. let me make it concrete.

knowing what to ask: the difference between "fix this bug" and "the regex on line 47 of parser.ts fails when the input contains nested brackets because the capture group doesn't account for depth. fix the regex, don't change the architecture" is the difference between a 20-minute mess (the kind i see when my friends try claude code for the first time and it goes full adhd) and a 30-second fix.

structuring context: agents work better when they know the shape of the problem. "here's the file, here's the failing test, here's the expected output, here's my theory about why it's broken" will almost always end up with better results than "it doesn't work, pls fix". it's like you're briefing a colleague who just walked into the room, except that colleague has a phd, is slightly drunk, and is overly excited by your project.
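if it helps, here's that briefing shape as a tiny python sketch. the `Brief` class and its field names are my own invention for illustration, not part of claude code, codex, or any other tool. the example values reuse the parser.ts bug from above; the test name and the expected output are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    """The four things an agent should know before it starts (hypothetical shape)."""
    file_path: str
    failing_test: str
    expected_output: str
    theory: str
    constraints: str = ""  # optional guardrails, e.g. what not to touch

    def render(self) -> str:
        """Turn the brief into a plain-text prompt, skipping empty sections."""
        sections = [
            ("file", self.file_path),
            ("failing test", self.failing_test),
            ("expected output", self.expected_output),
            ("my theory", self.theory),
            ("constraints", self.constraints),
        ]
        return "\n".join(f"{label}: {value}" for label, value in sections if value)


# Hypothetical values, for illustration only.
brief = Brief(
    file_path="parser.ts",
    failing_test="parses nested brackets",
    expected_output="the full bracketed span, including the inner brackets",
    theory="the regex on line 47 has a capture group that doesn't account for depth",
    constraints="fix the regex, don't change the architecture",
)
print(brief.render())
```

the point isn't the class, it's that filling in the four fields forces you to do the thinking before the agent starts guessing.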

knowing when to intervene: sometimes you let the agent run. sometimes you stop it after 10 seconds because you can see it's heading in the wrong direction. this "vibe" is a feel thing. it develops over thousands of hours of using something like claude code and codex. there is no shortcut (yet, i think?).

the people i see struggling with AI tools are usually struggling with one of these three. they either ask the wrong question, give insufficient context, or let the agent run too long on a bad trajectory.

and this is the generalist advantage. these skills transfer across every domain. the person who can prompt well in security can prompt well in frontend can prompt well in infrastructure. the meta-skill is domain-agnostic.

what actually differentiates now

so if LLMs are compressing expertise, what's left?

judgment. knowing what to build, not just how to build it. knowing when the tool is wrong. knowing when the architecture will collapse in six months.

execution speed. the distance between "idea" and "shipped" is where most value lives now. not in the idea itself. not in the code itself. in the speed of the loop.

taste. the ability to look at something and know it's not right, even if you can't immediately articulate why. this is human. this is not compressible. "THE VIBES"

connecting domains. security + infrastructure + product thinking + music production + business strategy. the more domains you can bridge, the more non-obvious solutions you can see. AI makes each domain more accessible. the human advantage is in the connections between them.

none of these are specialist skills. all of them are generalist skills.

the specialist asks: "how do i go deeper?"

the generalist asks: "how do i connect these three things nobody else sees are related?"

in a world where AI handles the depth, the connector wins.

what i'm building with this

i'm building a company around it.

pwnkit orchestrates AI agents for offensive security — automated vulnerability discovery with blind verification. it's the tool i used to find 7 CVEs in npm packages with 40M+ weekly downloads. foxguard is the static analysis piece.

the core bet: AI agents will transform security testing the same way they're transforming development. more aggressive, more systematic, more reproducible. for web apps, LLM systems, code, and packages. the attack surface is growing faster than human auditors can cover it.

building this required security research, CLI tooling, agent orchestration, product design, open-source community building, and business strategy. no single specialist could have built it. a generalist with good agents could — and did.

foxguard recently hit the Hacker News front page. which is what triggered the email that triggered this post. the circle completes.

the uncomfortable truth

specialists will still matter, deeply, in specific contexts. the person who spent 10 years on kernel internals will still be irreplaceable for kernel work. the cryptographer will still be irreplaceable (probably) for cryptography.

but the median valuable contributor? that's shifting.

the median valuable contributor will increasingly be someone who can move across boundaries rather than someone who went deeper than anyone else in a single well.

the future belongs to fast generalists with good taste and strong judgment. i hear this from my yc and builder friends ALL the time: "taste this", "taste that"... i was like wth are they talking about, but now i get it.

the specialist digs the well. the generalist builds the aqueduct connecting all the wells together.

AI just made the aqueduct a lot cheaper to build.

someone asked me my process. my process is: have an idea at 9am, ship it by lunch, and deal with the consequences by dinner. the LLMs just made the lunch deadline realistic.