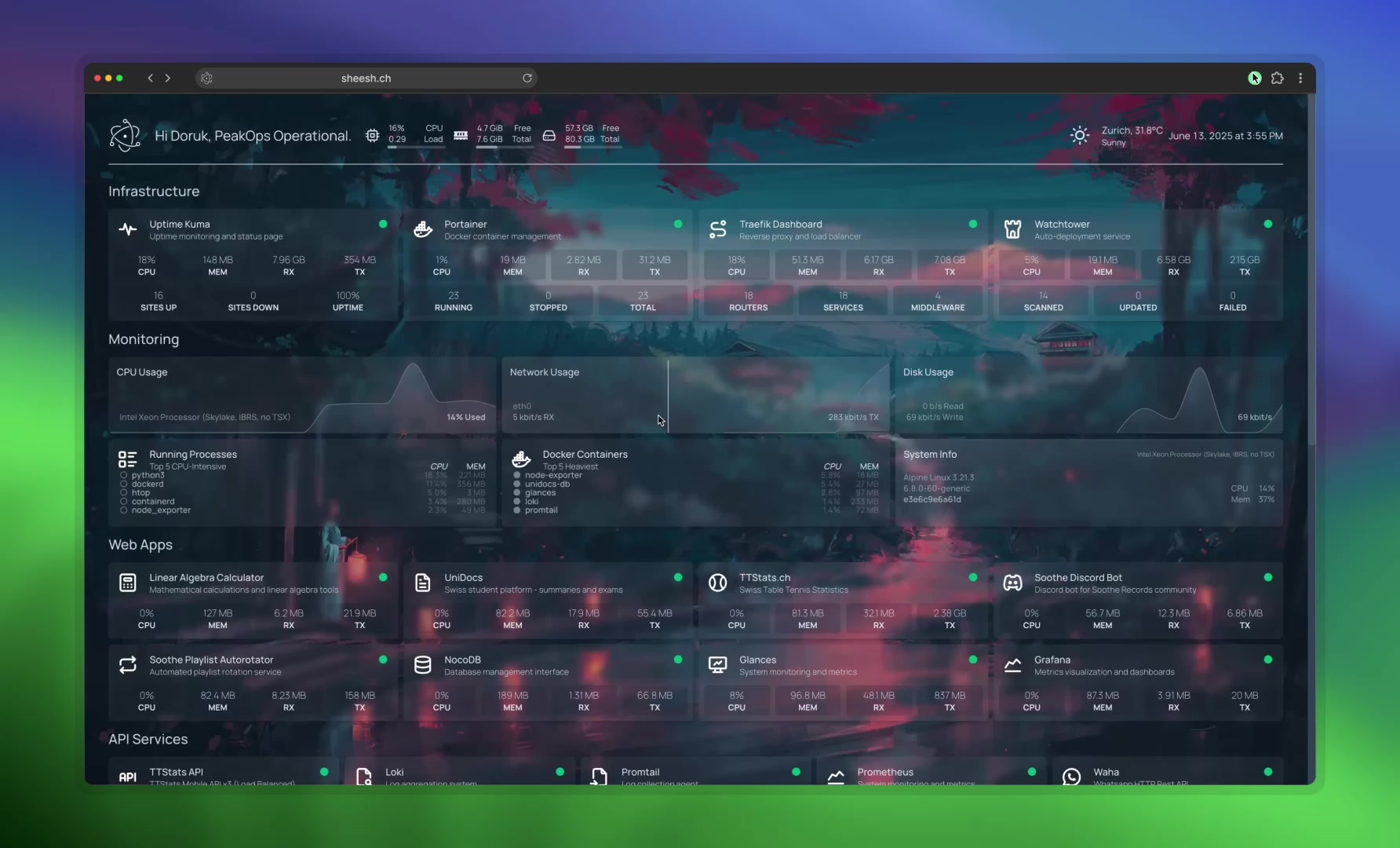

i run more self-hosted services than some Swiss startups

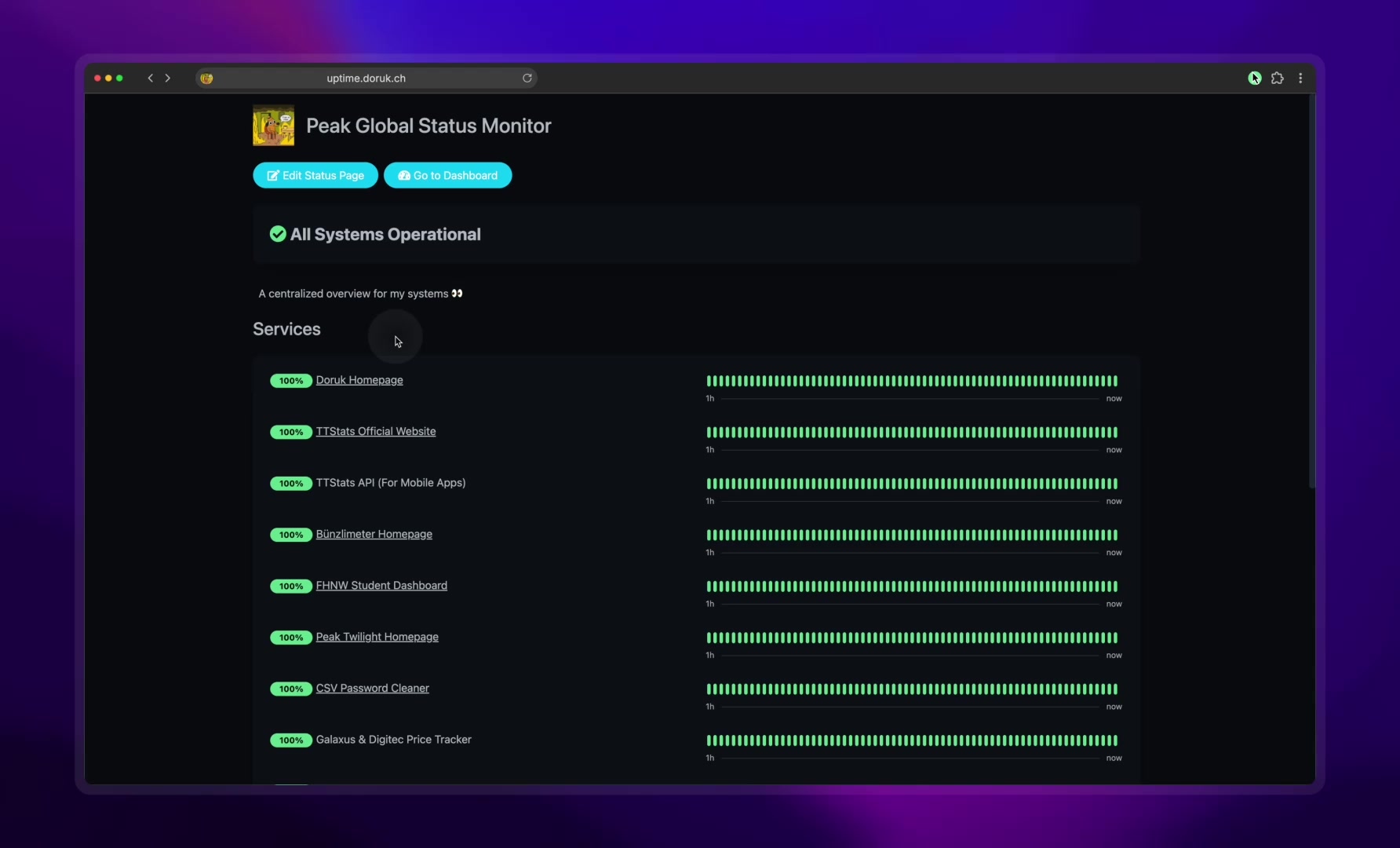

some people collect sneakers. i collect uptime percentages.

99.9% availability on services nobody asked for. 25+ self-hosted services, all monitored, all containerized, all running on hardware in my apartment. welcome to my homelab addiction.

the stack

here's what's running right now:

monitoring & observability

- uptime kuma — the backbone. monitors every service, every endpoint, every API. i'm also a contributor to the project (and recently found a security vulnerability in it, which is a whole other story). public status page at status.doruk.ch

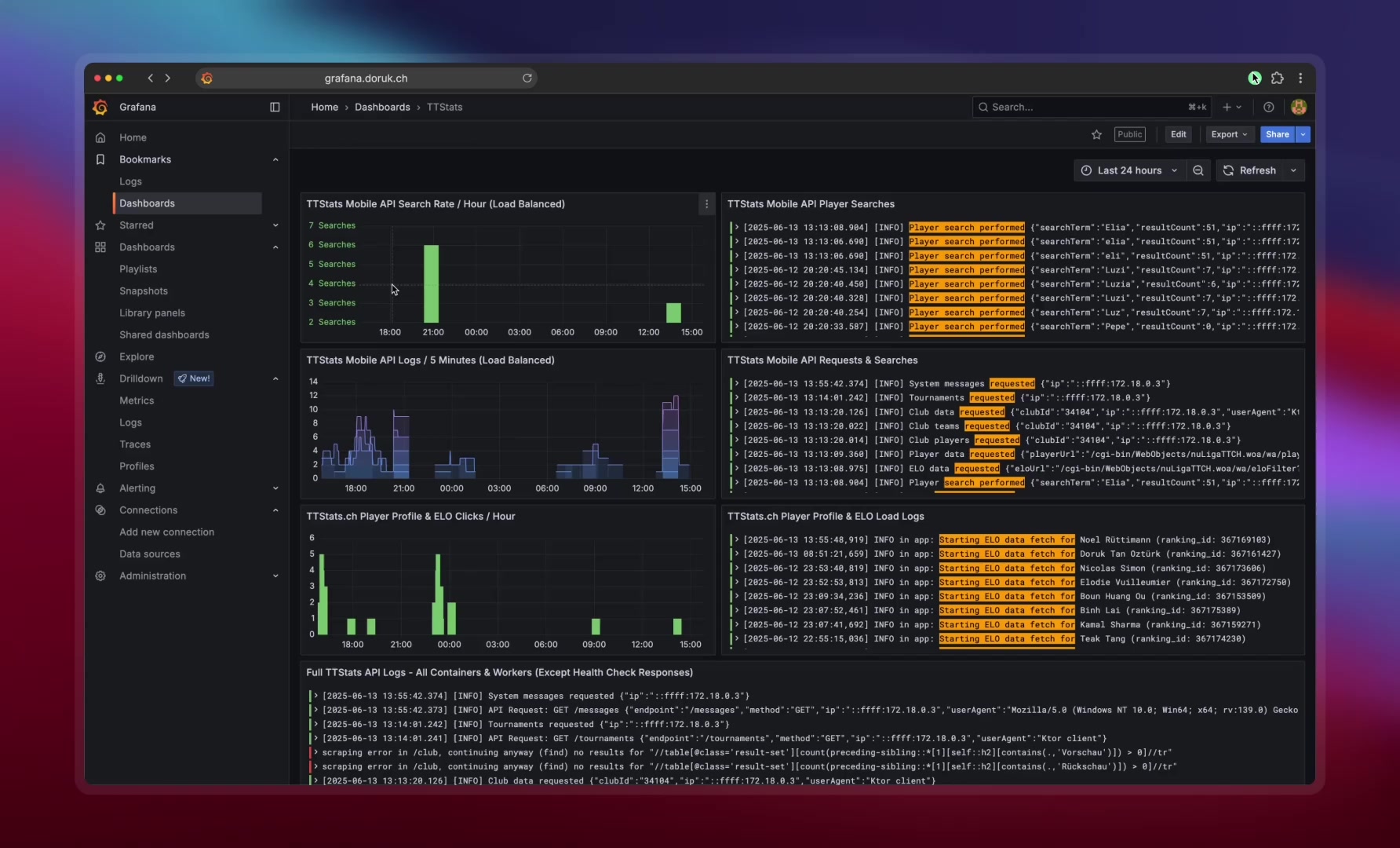

- grafana — dashboards for everything. API response times, resource usage, traffic patterns. if it produces metrics, it gets a dashboard

container management

- portainer — web UI for managing docker containers. yes i could do everything from the CLI. but sometimes you want to see all your containers in one place without typing `docker ps` for the hundredth time

- watchtower — automatic container updates. pulls new images, recreates containers, sends notifications. set it and forget it

networking

- traefik — reverse proxy and automatic SSL. every service gets its own subdomain and a valid certificate. traefik watches docker labels and configures routing automatically. no manual nginx configs, no certbot cron jobs
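
for anyone who hasn't seen label-based routing, here's a minimal sketch of what it can look like in a compose file. this is not my actual config: the service (`whoami`), the hostname, the entrypoint name `websecure`, and the resolver name `letsencrypt` are all placeholders, and it assumes traefik v2 or later with the docker provider enabled and a certificate resolver already defined in traefik's static configuration.

```yaml
# docker-compose.yml (illustrative sketch; all names below are placeholders)
services:
  whoami:
    image: traefik/whoami
    labels:
      # opt this container in (needed when exposedByDefault is false)
      - "traefik.enable=true"
      # route requests for this hostname to the container
      - "traefik.http.routers.whoami.rule=Host(`whoami.example.com`)"
      # listen on the HTTPS entrypoint (assumed to be named "websecure")
      - "traefik.http.routers.whoami.entrypoints=websecure"
      # request a certificate from the resolver (assumed to be named "letsencrypt")
      - "traefik.http.routers.whoami.tls.certresolver=letsencrypt"
      # port the container listens on internally
      - "traefik.http.services.whoami.loadbalancer.server.port=80"
```

the point of the pattern is that the routing config lives next to the service definition, so adding a service and exposing it is one file change.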

data & productivity

- nocodb — self-hosted airtable. i use it for project tracking, bug databases, and the occasional spreadsheet that's too complex for google sheets but doesn't need a real database

- plus a bunch of other services i won't list because operational security exists

why self-host?

speed of deployment. i can go from "i want to try this tool" to "it's running in production with SSL and monitoring" in under 10 minutes. no sign-up forms. no credit cards. no waiting for provisioning. just `docker-compose up -d` and a traefik label.

learning by breaking. my homelab has taught me more about networking, DNS, TLS, containers, reverse proxies, and linux administration than any course. because when something breaks at 2am, you don't have a support team — you have stack overflow and determination.

control. my data lives on my hardware, in my country. no terms of service changes. no sudden pricing increases. no "we're pivoting and shutting down this product" emails.

it's fun. there, i said it. running infrastructure is genuinely enjoyable when it's your own playground. nobody's paging you (except you, because you set up alerting). nobody's writing postmortems (except you, because you started a notes file for your own incidents).

the 2am incidents

every homelab operator has stories. here are some of mine.

the time watchtower auto-updated a database container and the new version had an incompatible data format. at 2:47am i got a notification that my monitoring dashboard was down. which was ironic because the monitoring system that sent the notification was also partially down. spent 45 minutes rolling back. lesson learned: pin your database versions.
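
a sketch of what "pin your database versions" can look like in compose. the database here (postgres) and the exact tag are stand-ins, since the story above doesn't depend on which database it was. the label is watchtower's documented opt-out, assuming watchtower is running in its default mode where it watches every container unless told otherwise.

```yaml
# docker-compose.yml (illustrative sketch; database and tag are placeholders)
services:
  db:
    # exact version tag instead of "latest", so a pull never jumps major versions
    image: postgres:16.4
    labels:
      # tell watchtower to leave this container alone entirely
      - "com.centurylinklabs.watchtower.enable=false"
    volumes:
      - db-data:/var/lib/postgresql/data

volumes:
  db-data:
```

the two measures overlap on purpose: the pinned tag keeps the version fixed, and the label means database upgrades only happen when i do them by hand, ideally after a backup.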

the time i accidentally let a TLS certificate expire on a saturday. everything still worked over HTTP, but traefik was configured to redirect everything to HTTPS. so every service returned a certificate error. technically 100% uptime. practically 0% usability.

the time a power outage lasted exactly long enough for my UPS to die but not long enough for me to notice. came home to find everything had been down for 6 hours. my phone had 47 "service down" notifications. the status page showed a beautiful cliff where all the green lines turned red simultaneously.

what's next

i'm currently migrating parts of the stack to kubernetes. not because docker-compose isn't working — it's great for a homelab. but because i want to learn k8s properly, and the best way to learn something is to run it in production and deal with the consequences.

the goal is a hybrid setup: simple services stay on docker-compose, stateful workloads and anything that benefits from orchestration moves to k8s. rolling updates, self-healing, proper secret management, declarative everything.
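
to make "rolling updates" and "self-healing" concrete, here's a minimal sketch of a kubernetes deployment that asks for both. it's not taken from my cluster: the app name, image tag, replica count, and probe settings are all illustrative assumptions.

```yaml
# deployment.yaml (illustrative sketch; names and values are placeholders)
apiVersion: apps/v1
kind: Deployment
metadata:
  name: whoami
spec:
  replicas: 2
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 0   # never drop below the desired replica count during an update
      maxSurge: 1         # bring up one extra pod at a time
  selector:
    matchLabels:
      app: whoami
  template:
    metadata:
      labels:
        app: whoami
    spec:
      containers:
        - name: whoami
          image: traefik/whoami:v1.10.1   # tag is illustrative
          ports:
            - containerPort: 80
          # self-healing: the kubelet restarts the container if this check keeps failing
          livenessProbe:
            httpGet:
              path: /
              port: 80
            periodSeconds: 10
```

this is the "declarative everything" part: the file describes the desired state, and the cluster works to keep reality matching it.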

will it be overkill? absolutely. will i learn a ton? also absolutely.

the honest truth

running a homelab is a hobby that disguises itself as productivity. you tell yourself "i'm learning valuable infrastructure skills" (true) and "this saves money compared to cloud services" (debatable) and "i need all 25 of these services" (absolutely false).

but it's also genuinely one of the best ways to learn. every incident is a lesson. every new service is a mini-project. and there's something deeply satisfying about checking your status page and seeing a wall of green uptime bars for services you built, deployed, and maintained yourself.

99.9% availability on services nobody asked for. and i wouldn't have it any other way.